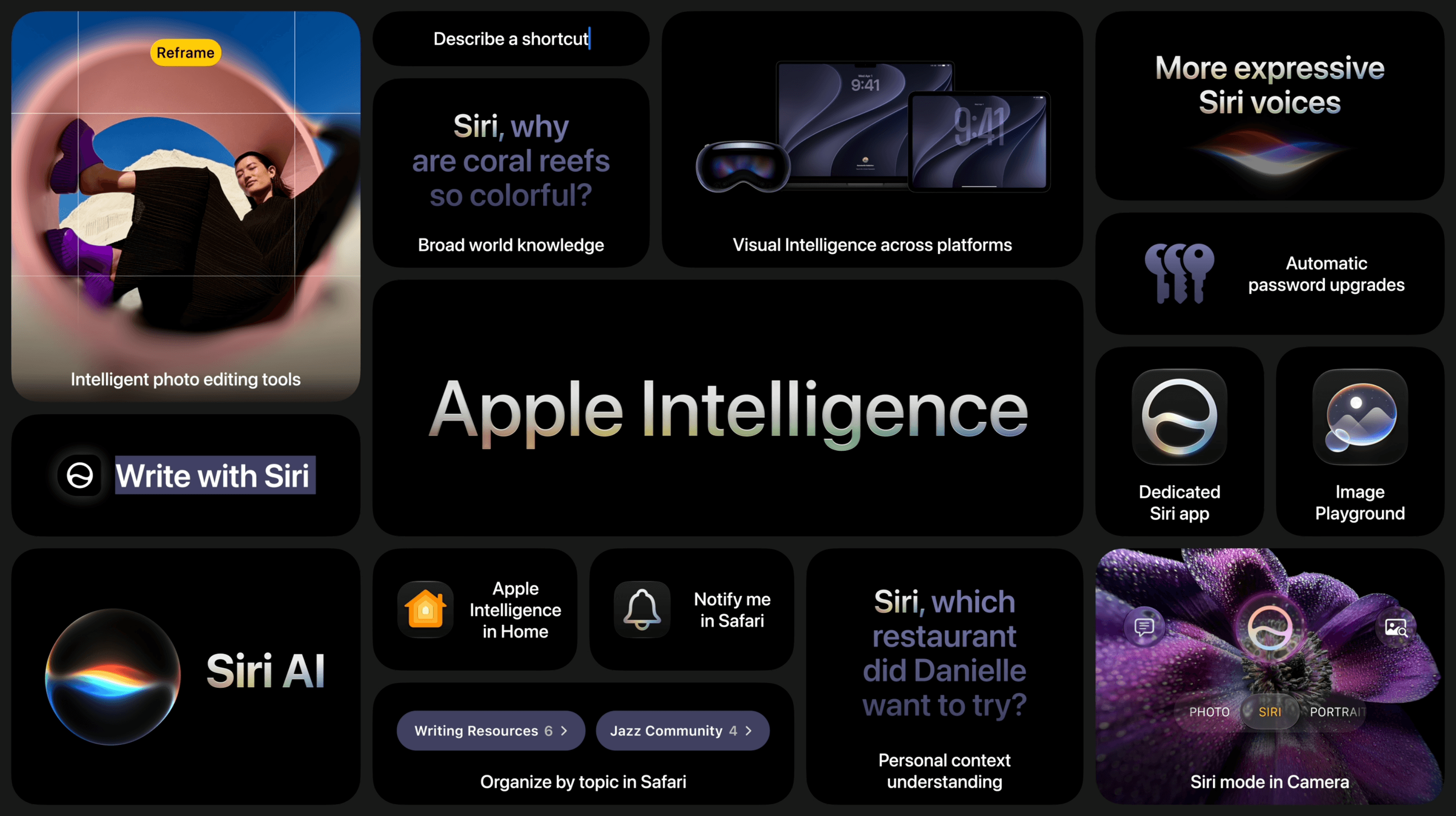

During this year’s WWDC keynote, Apple announced improvements to icons for all of its first-party apps. The company says that by “integrating additional layers of Liquid Glass directly into the icon artwork itself,” icons now “appear sharper and more defined.”

It’s certainly a noticeable improvement, and unsurprisingly, Basic Apple Guy is all over it with an excellent side-by-side comparison of every updated icon in macOS Golden Gate. (Many of these icons carry over to other platforms, of course.)

The clarity and legibility of almost all the icons have improved significantly, with icons like Photos packing a real visual punch. Viewed at this size, the icons also show off the nice refraction effects of their glass-like elements.

The one that really stood out for me, though, was the new Finder icon, which not only looks cleaner but also has a subtle change to the nose and the curve of the divide, bringing it much closer to the classic Finder icon from the pre-Liquid Glass days.

What can I say? I’m a stickler for classic design.

You can follow all of our WWDC coverage through our WWDC 2026 hub or subscribe to the dedicated WWDC 2026 RSS feed.