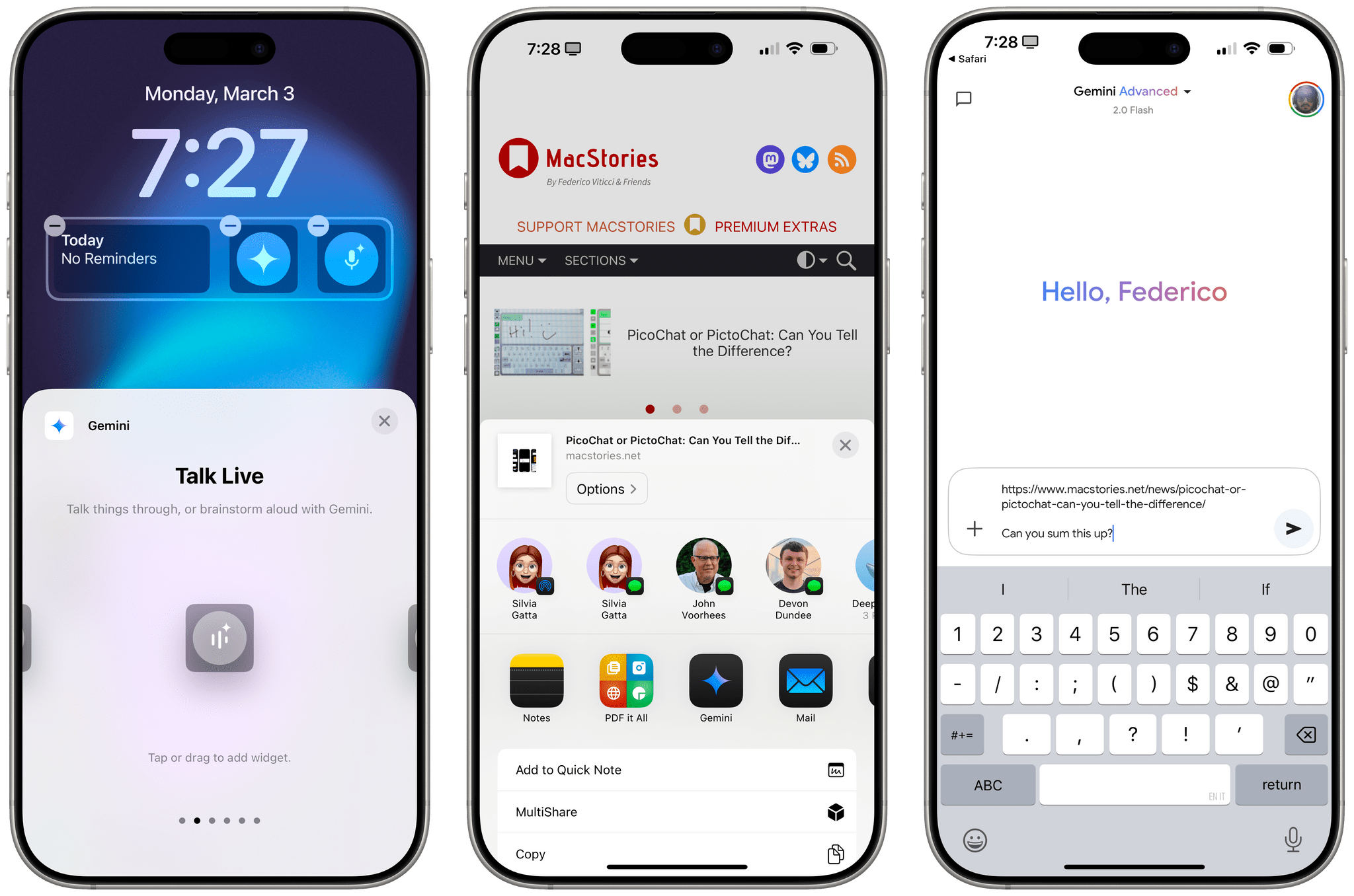

This is an interesting idea by Parker Ortolani: what if Apple allowed users to change their default assistant from Siri to something else?

I do not want to harp on the Siri situation, but I do have one suggestion that I think Apple should listen to. Because I suspect it is going to take quite some time for the company to get the new Siri out the door properly, they should do what was previously unthinkable. That is, open up iOS to third-party assistants. I do not say this lightly. I am one of those folks who does not want iOS to be torn open like Android, but I am willing to sign on when it makes good common sense. Right now it does.

And:

I do not use Gemini as my primary LLM generally, I prefer to use ChatGPT and Claude most of the time for research, coding, and writing. But Gemini has proved to be the best assistant out of them all. So while we wait for Siri to get good, give us the ability to use custom assistants at the system level. It does not have to be available to everyone, heck create a special intent that Google and these companies need to apply for if you want. But these apps with proper system level overlays would be a massive improvement over the existing version of Siri. I do not want to have to launch the app every single time.

As a fan of the progressive opening up of iOS that’s been happening in Europe thanks to our laws, I can only welcome such a proposal – especially when I consider the fact that long-pressing the side button on my expensive phone defaults to an assistant that can’t even tell which month it is. If Apple truly thinks that Siri helps users “find what they need and get things done quickly”, they should create an Assistant API and allow other companies to compete with them. Let iPhone users decide which assistant they prefer in 2025.

Some people may argue that other assistants, unlike Siri, won’t be able to access key features such as sending messages or integrating with core iOS system frameworks. My reply would be: perhaps having a more prominent placement on iOS would actually push third-party companies to integrate with the iOS APIs that do exist. For instance, there is nothing stopping OpenAI from integrating ChatGPT with the Reminders app; they have done exactly that with MapKit, and if they wanted, they could plug into HomeKit, HealthKit, and the dozens of other frameworks available to developers. And for those iOS features that don’t have an API for other companies to support…well, that’s for Apple to fix.

From my perspective, it always goes back to the same idea: I should be able to freely swap out software on my Apple pocket computer just like I can thanks to a safe, established system on my Apple desktop computer. (Arguably, that is also the perspective of, you know, the law in Europe.) Even Google – a company that would have all the reasons not to let people swap the Gemini assistant for anything else – lets folks decide which assistant they want to use on Android. And, as you can imagine, competition there is producing some really interesting results.

I’m convinced that, at this point, a lot of people despise Siri and would simply prefer pressing their assistant button to talk to ChatGPT or Claude – even if that meant losing access to reminders, timers, and whatever it is that Siri can reliably accomplish these days. (I certainly wouldn’t mind putting Claude on my iPhone and leaving Siri on the Watch for timers and HomeKit.) Whether it’s because of superior world knowledge, proper multilingual abilities (something that Siri still doesn’t support!), or longer contextual conversations, hundreds of millions of people have clearly expressed their preference for new types of digital assistance and conversations that go beyond the antiquated skillset of Siri.

If a new version of Siri isn’t going to be ready for some time, and if Apple does indeed want to make the best computers for AI, maybe it’s time to open up that part of iOS in a way that goes beyond the (buggy) ChatGPT integration with Siri.